[#86] The Agentic Commerce Stack (Part 1): Works for low-risk flows, not for secure scale (yet)

The happy path in agentic and A2A commerce works. What’s still unsolved is the unhappy path: what happens when things break and data or credentials leak. To scale, these systems need privacy and trust layers.

The AI industry has spent the last two years building agents that can reason, use tools, and talk to each other. Google’s A2A lets agents communicate. Anthropic’s MCP lets them access tools. Payment protocols are emerging so agents can transact autonomously. But there’s a problem nobody has cleanly solved: when Agent A from Company X collaborates with Agent B from Company Y, and the task requires sharing sensitive data (transaction amounts, account identifiers, treasury positions), who decides what gets shared, how is it protected, and how do you prove what was shared after the collaboration is over?

Today, the answer is: nobody. Or worse, each company rolls its own solution, hardcodes privacy rules into its foundation, and hopes policy and trust are enough. That is what I’ve tried to unpack in this piece: for agentic workflows, and money movement in general, to scale, what needs to happen? Put very simply, we need a different data architecture.

The big problem with agentic workflows as a whole is that while the ‘happy flows’ work, the system still lacks risk and trust layers, and that prevents it from actually scaling.

If everything works as intended, agentic workflows can run smoothly. But the real question isn’t whether they work; it’s whether they can be trusted at scale. Fraud prevention, adversarial attacks, credential theft, and data leakage are still evolving areas. Until these are solved with confidence, enterprise adoption will remain limited.

The first issue is that many risks are still unknown. Most systems today are built on assumptions and first-principles thinking, not on problems that have surfaced at scale. And if you have built systems, you know that vulnerabilities don’t show up in design; they show up at scale, under adversarial conditions. Put simply, we don’t yet know what failure looks like in fully agentic systems. Problems need to emerge before they can be understood and fixed, which means current safeguards are largely unproven.

The second issue is that the fixes require structural change. Existing systems were built for humans in the loop. For example, authentication assumes human intent and confirmation, and authorization assumes explicit actions, again taken by a human (either in real time or via a pre-set mandate). Agentic systems break these assumptions: agents act autonomously, decisions are multi-step, and intent is inferred and passed from agent to agent. Solving this requires rethinking identity, authentication, and authorization, not just patching a layer on top and calling it “agent access.”

These kinds of changes rarely happen proactively. They usually follow a major failure (fraud, an exploit, or regulatory pressure), or they require strong conviction to build ahead of time. Both are slow paths, and both usually come with a big $ amount attached.

The big chicken-and-egg problem today is that security systems are typically reactive. Checks and balances emerge after something breaks, not before; until then, they’re seen as over-engineering. It is hard to justify a costly project, and attach a dollar value to it, unless some sort of breach or fraud has actually happened. In agentic systems, this is amplified. The more access an agent has (to tools, credentials, data, context, and memory), the better it executes, and the more damage it can do if it is compromised. But while everyone is talking about how agents are the future, the risk and fraud picture is far from clear. The attack surface is large, but undefined.

So the blocker isn’t capability: it’s trust. Adoption will stay controlled until systems can not just execute workflows, but contain failures and prove they’re secure.

I am bullish on agentic payments and workflows globally (though not in India). The problem is that giving an entity governed by a non-deterministic model the authority to access services and move money on your behalf can blow up in your face, badly, unless the checks and balances are rock solid.

You can read some of my past articles below:

[#80] Google’s UCP & AP2: Moving agentic commerce from just “plausible” to “scalable”

[#83] The AI Money Movement Layer (Part 2): 5 Blueprints Built on the Same Core Principles

TLDR: a lot has evolved in agentic payments over the last year.

Almost every major fintech network, global player, and platform with meaningful merchant scale has launched its own version of an agentic payment protocol. These protocols define three core things: how agents are identified, how they initiate payments, and how those payments are authorized, whether via mandates, human-in-the-loop auth (PINs, biometrics), or fully autonomous flows (like Stripe’s ACP and SPT). Underneath all of this sits the settlement layer, where these workflows ultimately connect to banks or blockchains to move funds. Broadly, these systems are converging around two models:

1. Agentic workflows for human commerce (where the human consumes the good or service)

A pre-set mandate that the agent can draw on (UPI Reserve Pay and Google’s AP2 offer this functionality)

A real-time flow, but with a human in the loop (Cashfree x OpenAI, where the agent platform initiates the payment, but the human is required for biometric authentication. IMO, this is the model India will have to follow, given RBI’s 2FA mandate for payments and the new regulations that rolled out in April 2026, which require one of the two factors to be dynamic).

A real-time autonomous flow, like Stripe’s ACP. Here, the agent creates a tightly bound context token by calling Stripe’s APIs and sends it to the merchant. The merchant checks the token by calling Stripe’s APIs again and validates that it matches the expected amount, merchant, and so on. Once the token is validated, Stripe charges the user’s saved card.
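To make “tightly bound” concrete, here is a minimal sketch of the property described above. It is not Stripe’s API or token format: the HMAC-signed JSON token, the issuer-held key, and the `issue_token` / `validate_and_consume` functions are all hypothetical. What it shows is the core idea: the token is bound to one merchant, one amount, one currency, an expiry, and a single use, so a token that leaks or is replayed elsewhere fails validation.

```python
import hashlib
import hmac
import json
import secrets
import time

# Hypothetical issuer-side key; in the flow described above, only the payment provider holds it.
ISSUER_KEY = secrets.token_bytes(32)
_used_token_ids: set = set()  # single-use enforcement, kept in memory for this sketch


def _sign(body: str) -> str:
    return hmac.new(ISSUER_KEY, body.encode(), hashlib.sha256).hexdigest()


def issue_token(merchant_id: str, amount_cents: int, currency: str, ttl_s: int = 300) -> str:
    """Agent side: request a token scoped to exactly one purchase (hypothetical format)."""
    claims = {
        "tid": secrets.token_hex(8),
        "merchant": merchant_id,
        "amount": amount_cents,
        "currency": currency,
        "exp": int(time.time()) + ttl_s,
    }
    body = json.dumps(claims, sort_keys=True)
    return body + "." + _sign(body)


def validate_and_consume(token: str, merchant_id: str, amount_cents: int, currency: str) -> bool:
    """Issuer side: the check the merchant triggers before the saved card is charged."""
    body, _, sig = token.rpartition(".")
    if not hmac.compare_digest(sig.encode(), _sign(body).encode()):
        return False  # forged or tampered token
    claims = json.loads(body)
    if claims["tid"] in _used_token_ids or claims["exp"] < time.time():
        return False  # replayed or expired
    if (claims["merchant"], claims["amount"], claims["currency"]) != (merchant_id, amount_cents, currency):
        return False  # token was bound to a different purchase
    _used_token_ids.add(claims["tid"])
    return True


token = issue_token("merchant_123", 4_999, "USD")
print(validate_and_consume(token, "merchant_999", 4_999, "USD"))  # False: wrong merchant
print(validate_and_consume(token, "merchant_123", 4_999, "USD"))  # True: exact match
print(validate_and_consume(token, "merchant_123", 4_999, "USD"))  # False: already used
```

The design choice worth noting is that validation happens at the issuer, not the merchant: the merchant never needs the raw card credential, only a yes/no on a narrowly scoped token.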

2. Agentic workflows for machine commerce (where the consumer is another machine or agent, e.g. paid APIs)

Two dominant approaches have emerged:

Stripe MPP (Machine Payments Protocol): centralized, supports both fiat and crypto, and is built on HTTP primitives (specifically the HTTP 402 Payment Required status code)

Coinbase x402: decentralized, crypto-native, and also built on HTTP 402. Notably, x402 has already seen meaningful traction, with ~75M transactions and ~$24M in volume over the last 30 days, which suggests real demand for machine-native payment flows. Coinbase also recently donated x402 to the Linux Foundation as an open-source protocol.
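Both approaches lean on the same HTTP idea: a server answers 402 Payment Required with its price, and serves the resource once the retried request carries proof of payment. Below is a minimal in-process sketch of that loop, with no network involved. It is not the x402 or MPP wire format: the `X-Payment-Proof` header, the JSON fields, and the `pay()` stub standing in for real on-chain or card settlement are all hypothetical.

```python
import hashlib
import json
import secrets

# Hypothetical price terms a paid API might advertise in its 402 response.
PRICE = {"amount": "0.01", "asset": "USDC", "pay_to": "merchant-wallet"}
_settled_receipts: set = set()  # receipts the (stub) settlement layer has confirmed


def pay(terms: dict) -> str:
    """Stub settlement: pretend funds moved and return a receipt id."""
    seed = json.dumps(terms, sort_keys=True).encode() + secrets.token_bytes(8)
    receipt = hashlib.sha256(seed).hexdigest()
    _settled_receipts.add(receipt)
    return receipt


def server(path: str, headers: dict) -> tuple:
    """Paid endpoint: respond 402 with terms until a valid, unused receipt is presented."""
    proof = headers.get("X-Payment-Proof")  # hypothetical header name
    if proof is None or proof not in _settled_receipts:
        return 402, {"error": "payment required", "accepts": PRICE}
    _settled_receipts.discard(proof)  # one receipt buys one call
    return 200, {"data": f"result for {path}"}


def agent_get(path: str) -> dict:
    """Client loop: try, read the 402 terms, pay, retry with proof."""
    status, body = server(path, {})
    if status == 402:
        # A real agent would check body["accepts"] against its spending policy before paying.
        receipt = pay(body["accepts"])
        status, body = server(path, {"X-Payment-Proof": receipt})
    if status != 200:
        raise RuntimeError(f"request failed with status {status}")
    return body


print(agent_get("/weather"))  # {'data': 'result for /weather'}
```

The appeal for machine commerce is that payment becomes part of the request/response cycle itself, so an agent can discover a price and pay for a single API call without a prior account relationship.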

Ecosystem players are actively betting on this, across both human and machine commerce. From a first-principles perspective, it makes sense: if workflows become autonomous, payments have to become programmable and embedded within them. So, will agentic payments reshape payments? I’d say yes, and we’re already seeing early signs of scale. But enterprise adoption is a different story. Individuals may experiment and adopt faster, but for enterprises, a lot still needs to be figured out, especially around trust, security, and control.

The IMF published a paper on how agentic AI will reshape payments, and AI workflows more broadly

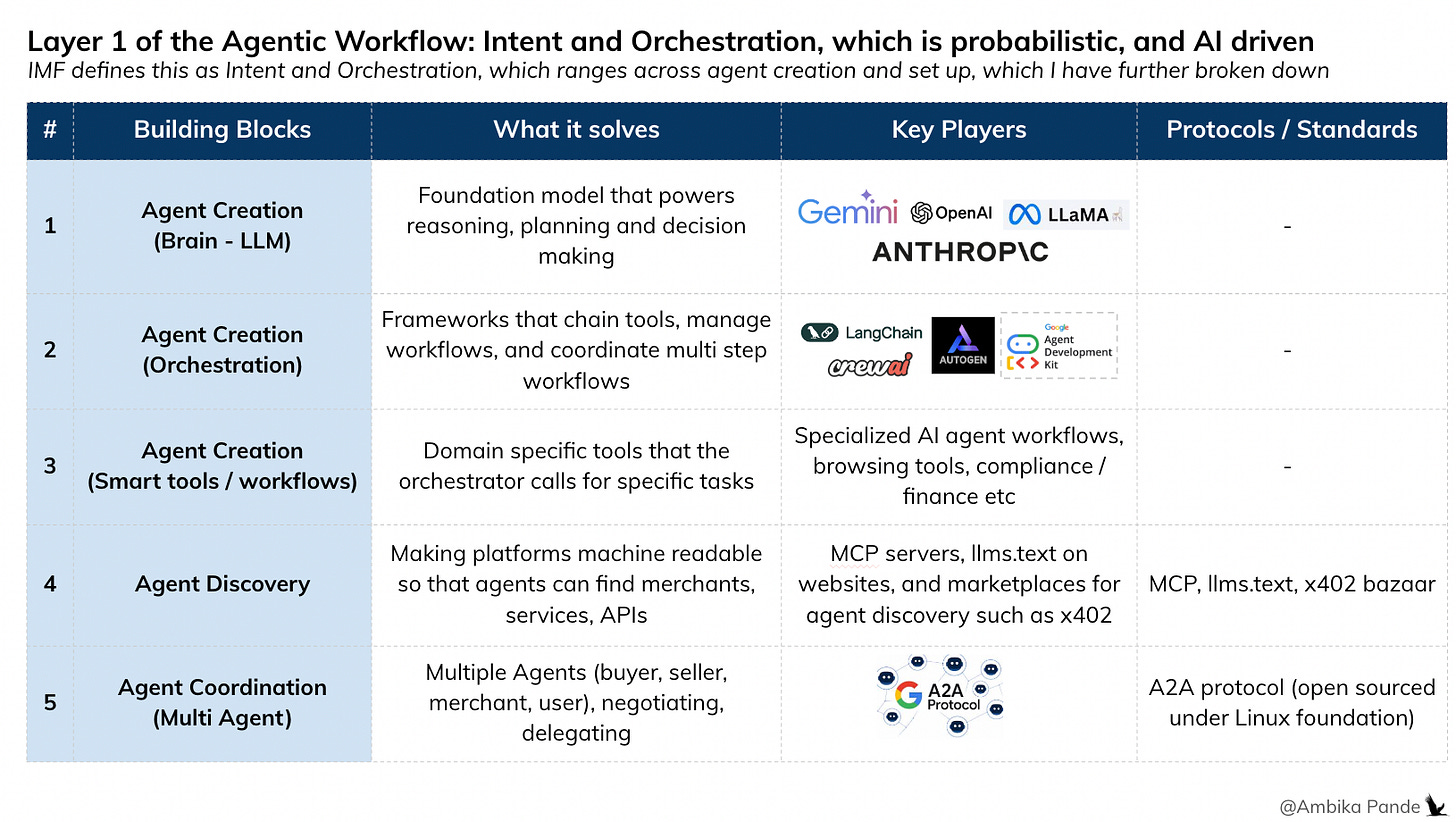

The International Monetary Fund (IMF) published a paper on how Agentic AI will reshape payments. A lot of it aligns with how I’ve been thinking about it. They break the stack into three layers. Building on that, here’s a more detailed view:

Layer 1: Agent Intent and Orchestration

🟢 Status: Largely solved.

This layer is about translating high-level user intent into structured, machine-readable workflows. It includes reasoning, planning, and coordination, but not execution or authorization. In practice, it covers choosing the foundation model that powers the workflow, enabling agent discovery, and enabling agent-to-agent communication. I’d break this down further into three parts:

1️⃣ Agent Creation: How do you create an agent and define what it can do?

Foundation models (ex: Claude, ChatGPT) power reasoning, aka The Brain

Frameworks like LangChain, Google ADK, and CrewAI handle orchestration

Smart tools and workflows: agents built for specific workflows or tasks, like onboarding or email responses (an example comes later)

2️⃣ Agent discovery: How do agents find tools, APIs, or other agents?

MCP (Model Context Protocol): A standardized way to expose tools and APIs, including merchant APIs, to LLMs and agents

llms.txt: A simple way to think about it: a plain text file at the root of your website (yoursite.com/llms.txt) that tells AI agents, “Here’s everything important about my site, in a format you can actually read.”

Marketplaces for agent discovery: x402’s Bazaar enables discovery of all x402-enabled services. In x402 v2, the Bazaar has been codified as an official extension in the reference x402 SDK

3️⃣ Agent coordination: Once discovered, how do agents communicate? Protocols like Google’s A2A enable agent-to-agent interaction and coordination

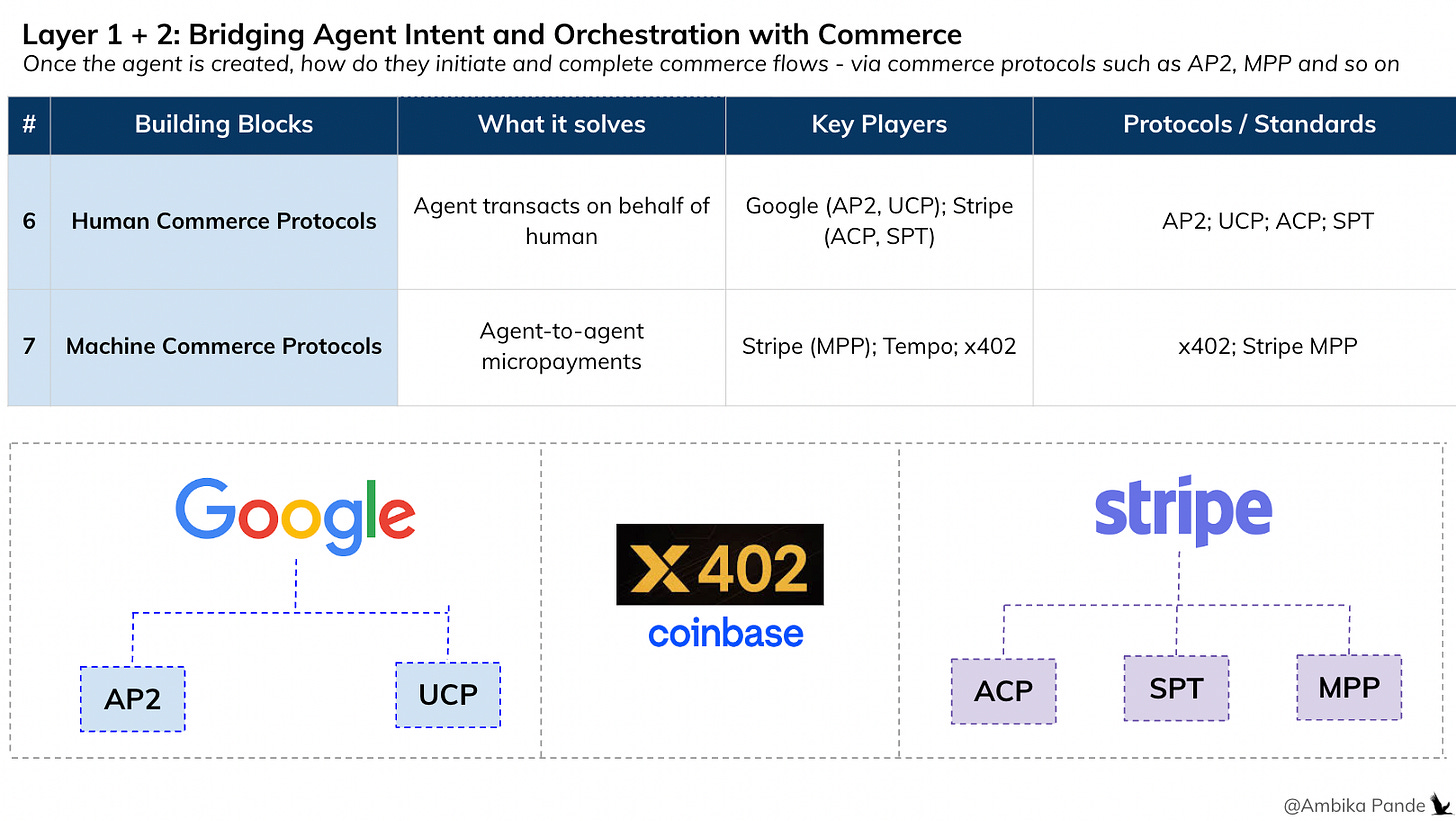

Layer 2: Bridging Agent Intent and Orchestration with Commerce

🟢 Again, largely solved

Once an agent can reason and plan, the next question is: can it actually initiate and authorize payments? This layer connects intent to real-world commerce by defining how agents interact with payment systems. It splits into two models:

1️⃣ Human Commerce: This is where the agentic commerce protocols come in. Google’s AP2 + UCP form a unified stack for commerce: AP2 allows mandate creation, so the agent can use that authority to approve a payment, and UCP is a standard protocol for standardizing commerce across payment states. Stripe’s ACP + SPT are, again, protocols that let agentic payments happen.

2️⃣ Machine Commerce: Agents buying machine-consumable services. Stripe MPP, Tempo, and x402 enable micropayments by agents.

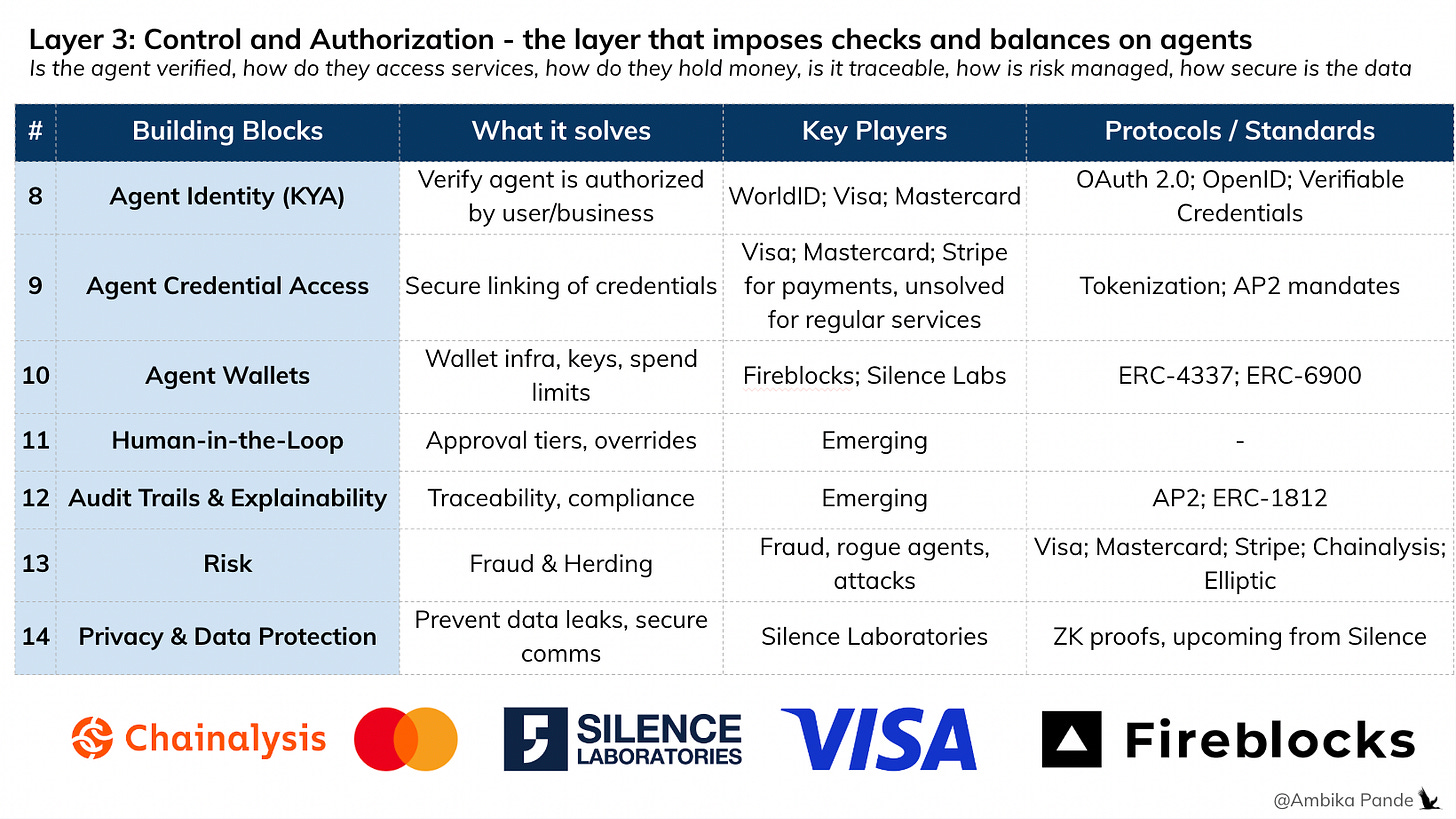

Layer 3: Control and Authorization - guardrails around what an agent is allowed to access and do

🟡 Partially solved, but critical gaps remain

This is the layer that determines whether an agent should be allowed to act. The IMF paper puts this really well. To quote:

“This layer enforces deterministic constraints that govern whether actions proposed or initiated by agents may proceed toward execution. Technologies in this layer ensure that authorization decisions are ultimately governed by deterministic policy rules, even when informed by upstream probabilistic systems. This domain focuses on protocols that shift trust from human oversight to technical safeguards through verifiable claims, authorization constraints, and identity frameworks”

To put it more simply:

Layer 1 is probabilistic (agents think).

Layer 2 is execution bridging (turning intent into payment-ready actions).

Layer 3 is deterministic (after all the planning and translation, systems decide whether agents are allowed to act).
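A minimal sketch of that split, assuming a hypothetical `ProposedPayment` produced by Layers 1 and 2 and a hypothetical `Mandate` the user set up in advance. The rules themselves are illustrative; the point is the shape. The model’s output, including its own confidence score, is only an input, and the allow/deny decision is a pure function of the proposal and the mandate, so the same inputs always produce the same decision and it can be replayed later.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedPayment:
    """Hypothetical output of Layers 1-2: what the probabilistic agent wants to do."""
    merchant: str
    amount_cents: int
    currency: str
    model_confidence: float  # informative only; never sufficient to authorize


@dataclass(frozen=True)
class Mandate:
    """Hypothetical user-granted authority the agent acts under."""
    allowed_merchants: frozenset
    max_amount_cents: int
    currency: str


def authorize(p: ProposedPayment, m: Mandate) -> tuple:
    """Layer 3: deterministic rules; identical inputs always yield the identical decision."""
    if p.merchant not in m.allowed_merchants:
        return False, "merchant not in mandate"
    if p.currency != m.currency:
        return False, "currency mismatch"
    if not 0 < p.amount_cents <= m.max_amount_cents:
        return False, "amount outside mandate"
    return True, "ok"


mandate = Mandate(frozenset({"airline-x", "hotel-y"}), max_amount_cents=50_000, currency="USD")
# A highly confident model does not override the rules:
print(authorize(ProposedPayment("airline-x", 72_000, "USD", model_confidence=0.99), mandate))
# (False, 'amount outside mandate')
print(authorize(ProposedPayment("hotel-y", 18_000, "USD", model_confidence=0.61), mandate))
# (True, 'ok')
```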

This layer translates intent into permissioned execution, ensuring that actions comply with identity, policy, and risk constraints before money moves. Breaking it down further:

1️⃣ Agent identity: How does a counterparty know an agent is legitimately acting on behalf of a user or business?

World ID (from Tools for Humanity, co-founded by Sam Altman, by the way) is building in this space

Visa and Mastercard are building here too, and they have an obvious advantage: close to 8B issued cards between them. These cards are linked to users who have already been KYC’d by the issuing bank, so linking card credentials to the agent is an easy way to prove agent identity.

2️⃣ Agent Credential Access: Once verified, can the agent safely access credentials needed to act? In my opinion, this is a major unsolved risk today.

Today’s model is that a single credential or token unlocks access. If the credential or token is stolen, there is not much that can be done apart from revoking or deleting it. Policy engines today govern what the agent does, NOT what the credential can be used for. And if credentials are stolen, attackers don’t just read data; they can execute financial actions autonomously. There are solutions today that harden how credentials are used (e.g., AWS SigV4 request signing, Aembit), but the underlying problem remains the same: there is a single source of truth.

This risk already exists today, but agentic systems amplify it: more agents → more credentials → a larger attack surface than in today’s human-in-the-loop world.

3️⃣ Agent Wallets + Spending Policies: This is relatively mature. This includes things such as wallet infra (custodial/non-custodial), policy engines (limits, thresholds, approvals), and MPC / threshold signatures. Players like Fireblocks and Silence Laboratories are building here. Human-in-the-loop controls (ex: approvals, 2FA) also sit here as part of policy enforcement.
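Spending policies like these are mostly stateful rules. The sketch below assumes a hypothetical per-agent wallet policy with three outcomes: small payments auto-approve, payments above an approval threshold escalate to a human (the human-in-the-loop control mentioned above), and anything that would breach a rolling 24-hour limit is denied. The class, thresholds, and method names are made up for illustration; real wallet infrastructure exposes its own policy configuration rather than this code.

```python
import time
from collections import deque
from enum import Enum
from typing import Optional


class Decision(Enum):
    APPROVE = "approve"
    NEEDS_HUMAN = "needs_human_approval"
    DENY = "deny"


class SpendingPolicy:
    """Hypothetical per-agent wallet policy: rolling daily cap plus a human-approval threshold."""

    def __init__(self, daily_limit_cents: int, approval_threshold_cents: int, window_s: int = 86_400):
        self.daily_limit = daily_limit_cents
        self.approval_threshold = approval_threshold_cents
        self.window_s = window_s
        self._spent = deque()  # (timestamp, amount_cents) of settled payments, oldest first

    def _spent_in_window(self, now: float) -> int:
        while self._spent and now - self._spent[0][0] >= self.window_s:
            self._spent.popleft()  # drop payments that fell out of the rolling window
        return sum(amount for _, amount in self._spent)

    def evaluate(self, amount_cents: int, now: Optional[float] = None) -> Decision:
        now = time.time() if now is None else now
        if amount_cents <= 0 or self._spent_in_window(now) + amount_cents > self.daily_limit:
            return Decision.DENY
        if amount_cents > self.approval_threshold:
            return Decision.NEEDS_HUMAN
        return Decision.APPROVE

    def record(self, amount_cents: int, now: Optional[float] = None) -> None:
        """Call only after the payment actually settles."""
        self._spent.append((time.time() if now is None else now, amount_cents))


policy = SpendingPolicy(daily_limit_cents=100_000, approval_threshold_cents=20_000)
t0 = 1_700_000_000.0
print(policy.evaluate(5_000, now=t0))                 # Decision.APPROVE
policy.record(5_000, now=t0)
print(policy.evaluate(30_000, now=t0))                # Decision.NEEDS_HUMAN
policy.record(90_000, now=t0 + 60)                    # e.g. a large, human-approved purchase
print(policy.evaluate(10_000, now=t0 + 120))          # Decision.DENY (95,000 + 10,000 > 100,000)
print(policy.evaluate(10_000, now=t0 + 86_400 + 61))  # Decision.APPROVE (window rolled over)
```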

4️⃣ Audit trails and explainability: Can every action be traced, verified, and justified?

In human payments, this usually means mandates or transaction logs. In agentic systems, it evolves into cryptographic audit trails, signed execution chains, and verifiable proofs of policy compliance. This becomes critical for compliance, disputes, and regulation.
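One common way to make a trail tamper-evident is a hash chain: each entry commits to the hash of the previous one, so editing or removing an entry breaks every link after it (truncating the tail is only caught if the latest hash is anchored somewhere external). The sketch below assumes a hypothetical log written by a single agent platform and uses an HMAC key as a stand-in for a real signature; a production system would more likely use asymmetric signatures, so verifiers never hold the signing key.

```python
import hashlib
import hmac
import json

SIGNING_KEY = b"demo-key-held-by-the-agent-platform"  # hypothetical stand-in for a real signing key
GENESIS = "0" * 64
FIELDS = ("seq", "agent", "action", "details", "prev")


def _hash_and_sign(body: dict) -> tuple:
    entry_hash = hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()
    sig = hmac.new(SIGNING_KEY, entry_hash.encode(), hashlib.sha256).hexdigest()
    return entry_hash, sig


def append(log: list, agent_id: str, action: str, details: dict) -> None:
    """Append an entry that commits to the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"seq": len(log), "agent": agent_id, "action": action, "details": details, "prev": prev_hash}
    entry_hash, sig = _hash_and_sign(body)
    log.append({**body, "hash": entry_hash, "sig": sig})


def verify(log: list) -> bool:
    """Recompute every hash, link, and signature; any edit to history breaks the chain."""
    prev_hash = GENESIS
    for i, entry in enumerate(log):
        expected_hash, expected_sig = _hash_and_sign({k: entry[k] for k in FIELDS})
        if (entry["seq"] != i or entry["prev"] != prev_hash or entry["hash"] != expected_hash
                or not hmac.compare_digest(entry["sig"].encode(), expected_sig.encode())):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list = []
append(audit_log, "agent-A", "policy_check", {"rule": "mandate", "result": "allow"})
append(audit_log, "agent-A", "payment", {"merchant": "hotel-y", "amount_cents": 18_000})
print(verify(audit_log))                          # True
audit_log[1]["details"]["amount_cents"] = 1_800   # tamper with history
print(verify(audit_log))                          # False
```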

5️⃣ Risk and Fraud: How do you protect against rogue agents, fake merchants, prompt injections, and man-in-the-middle attacks? Some foundations exist in payment systems: Visa and Mastercard are building agent authentication and verification, and Stripe Radar, built for fiat payments, can probably be used for agent payments as well. But this is still early. Fraud patterns in agentic systems are not yet fully understood, and they will evolve with real-world usage.

6️⃣ Privacy and Data: Agents exchange large amounts of contextual data, creating new leakage risks. Even with guardrails, LLMs are non-deterministic, and prompt injection can bypass constraints (the “grandma exploit”). Emerging approaches:

Shared context in a ‘clean room’-type environment: this paper by Outshift (by Cisco) describes a concept called the “Internet of Cognition”, which explains how agents across organizations can share goals and run secure computation on private data.

Encrypted computation (MPC, enclaves): How do you enable context sharing and computation without actually sharing the data?

Zero-knowledge proofs (for transaction validation without exposure)

Startups like Silence Laboratories are tackling this with encryption protocols, but again, this is an emerging space.
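To give a feel for computation without sharing the data, here is a toy version of additive secret sharing, one of the basic building blocks behind MPC. The scenario is hypothetical: two companies want their combined exposure to a shared counterparty without revealing their individual figures. Each splits its private number into random shares that sum to it modulo a large prime; each party sees only one random-looking share of the other’s value, yet the partial sums combine into the true total. Real MPC protocols add protection against cheating parties, support for multiplication, and networking, none of which is shown here.

```python
import secrets

PRIME = 2**127 - 1  # Mersenne prime used as the field modulus; must exceed any total we compute


def share(value: int, n_parties: int) -> list:
    """Split value into n random shares that sum to value mod PRIME."""
    shares = [secrets.randbelow(PRIME) for _ in range(n_parties - 1)]
    shares.append((value - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: list) -> int:
    return sum(shares) % PRIME


# Hypothetical private inputs, e.g. exposure to a shared counterparty in cents.
company_x_exposure = 4_200_000
company_y_exposure = 1_350_000

x_shares = share(company_x_exposure, 2)  # X keeps x_shares[0], sends x_shares[1] to Y
y_shares = share(company_y_exposure, 2)  # Y keeps y_shares[1], sends y_shares[0] to X

# Each party adds the shares it holds; any single share on its own is uniformly random.
partial_x = (x_shares[0] + y_shares[0]) % PRIME
partial_y = (x_shares[1] + y_shares[1]) % PRIME

total = reconstruct([partial_x, partial_y])
print(total)  # 5550000: the combined figure, without either input being disclosed
```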

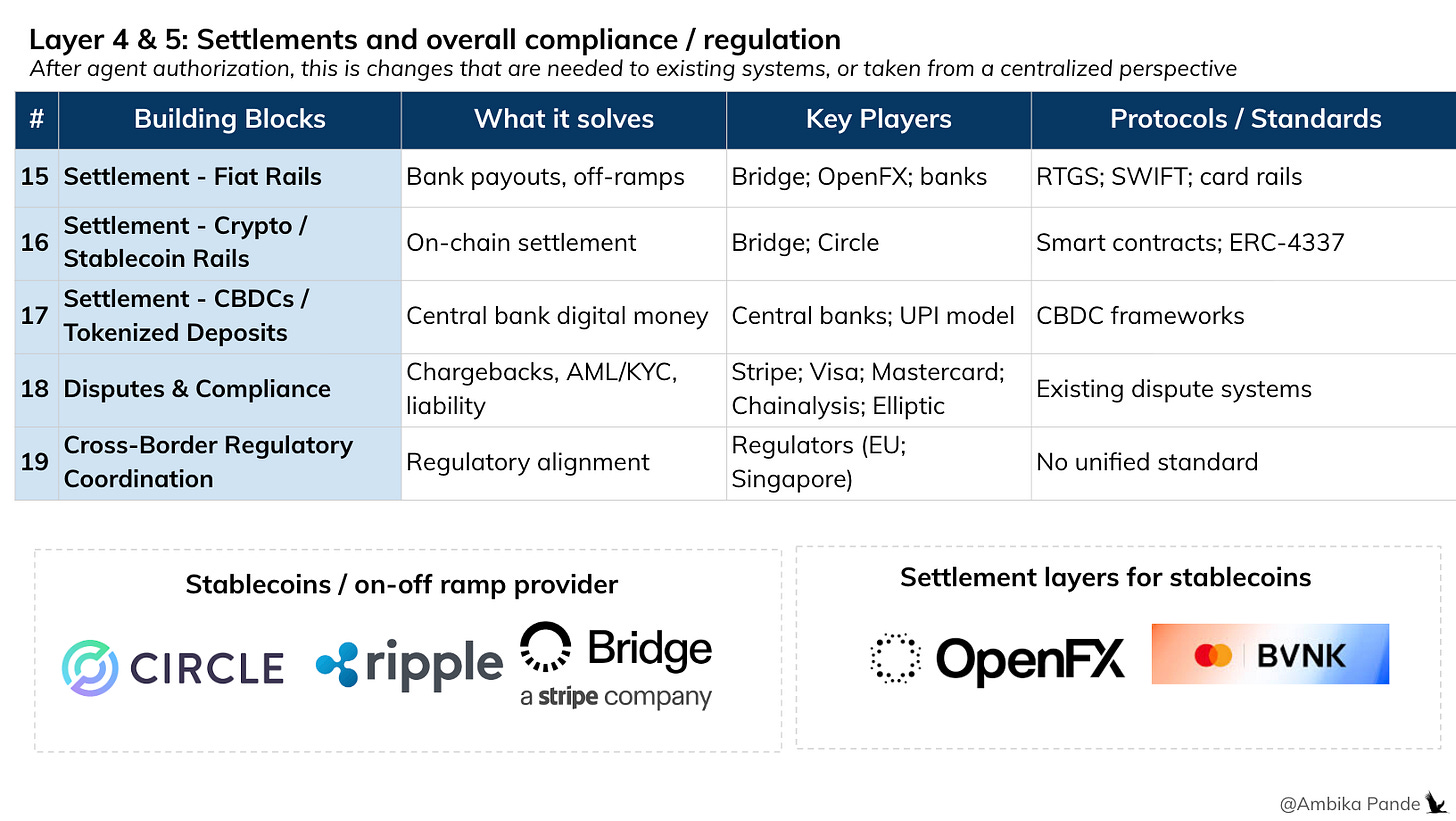

Layers 4 & 5: Settlement, and cross-functional risk and compliance checks

🟢 Largely solved (with evolution underway)

👉 Layer 4: Settlement (execution of value transfer)

This is where money actually moves, across banking rails, cards, or crypto networks. At this layer, the system ensures that once an action is approved, funds are reliably transferred.

On/off-ramps like Bridge handle conversion between crypto and fiat

Full-stack setups integrate with licensed payout partners for last-mile settlement

Players like OpenFX are building abstractions for cross-border flows

👉 Layer 5: Risk, Disputes & Compliance (post-execution control + audit)

This is where the ecosystem ensures transactions are legitimate, reversible (if needed), and compliant.

Networks like Stripe, Visa, and Mastercard handle dispute resolution and chargebacks

Risk and compliance tooling from Chainalysis and Elliptic enables fraud detection, AML checks, and transaction monitoring

Conceptually, this layer doesn’t change dramatically from today, but it needs to adapt to higher transaction velocity (agents acting continuously), new fraud surfaces (autonomous decisioning), and stronger auditability requirements (agent-driven actions need traceability, usually via some sort of cryptographic proof that is tamper-proof or tamper-evident).

TLDR: The stack is coming together, from intent, to execution, to settlement. But the real friction isn’t at the layer level. It’s inside the workflow.

In Part 2, I’ll break down what an actual agentic workflow looks like today, where the biggest gaps around data, privacy, and auditability start to show up, and some ways to address them.

Because until those are solved, this doesn’t become enterprise infrastructure; it remains a powerful but fragile system that works in demos, not at scale: impressive in isolation, but prone to breaking in production.